It’s not that GUI build tools are bad, per se, but you have to watch out for tools that use too much magic, and tools that don’t let you version control your build with the same zealousness that you use for the source code of the system you are building.

Let’s start with the second type of tool.

Many open source .NET Core projects use AppVeyor to build, test, and deploy applications and NuGet packages. When defining an AppVeyor build, you have a choice of using a GUI, or a configuration file.

With the GUI, you can point and click your way to a deployment. This is a perfectly acceptable approach for some projects, but it lacks some of the rigor you might need for larger projects or teams.

You can also define your build by checking a YAML configuration file into the root of your repository. Here’s an excerpt:
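As a rough illustration only, not a file from any particular project, an `appveyor.yml` that hands the real work to a build script might look something like the sketch below. The version format, build image, and artifact path are assumptions chosen for the example.

```yaml
# appveyor.yml (illustrative sketch; all values here are assumptions)
version: 1.0.{build}
image: Visual Studio 2017

# Delegate the actual build steps to a script that also runs on a dev machine
build_script:
  - ps: .\build.ps1

# Turn off AppVeyor's automatic test discovery; the script is assumed to run tests
test: off

# Collect any NuGet packages the script produced (path is hypothetical)
artifacts:
  - path: artifacts\*.nupkg
```

Keeping the file this small is a deliberate choice: the build platform only wires things together, while the logic lives in a script you can run anywhere.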

Think about the advantages of the source-controlled YAML approach:

You can version the build with the rest of your software

You can use standard diff tools to see what has changed

You can see who changed the build

You can copy and share your build with other projects

Also note that in the screenshot above, the YAML file calls out to a build script – build.ps1. I believe you should encapsulate as many build steps as possible into a package you can run on the build server and on a development machine. Doing so allows you to make changes, test changes, and troubleshoot build steps quickly. You can use MSBuild, PowerShell, Cake, or any technology that makes builds easier. Integration points, like publishing to NuGet, will stay as configurable steps in the build platform.

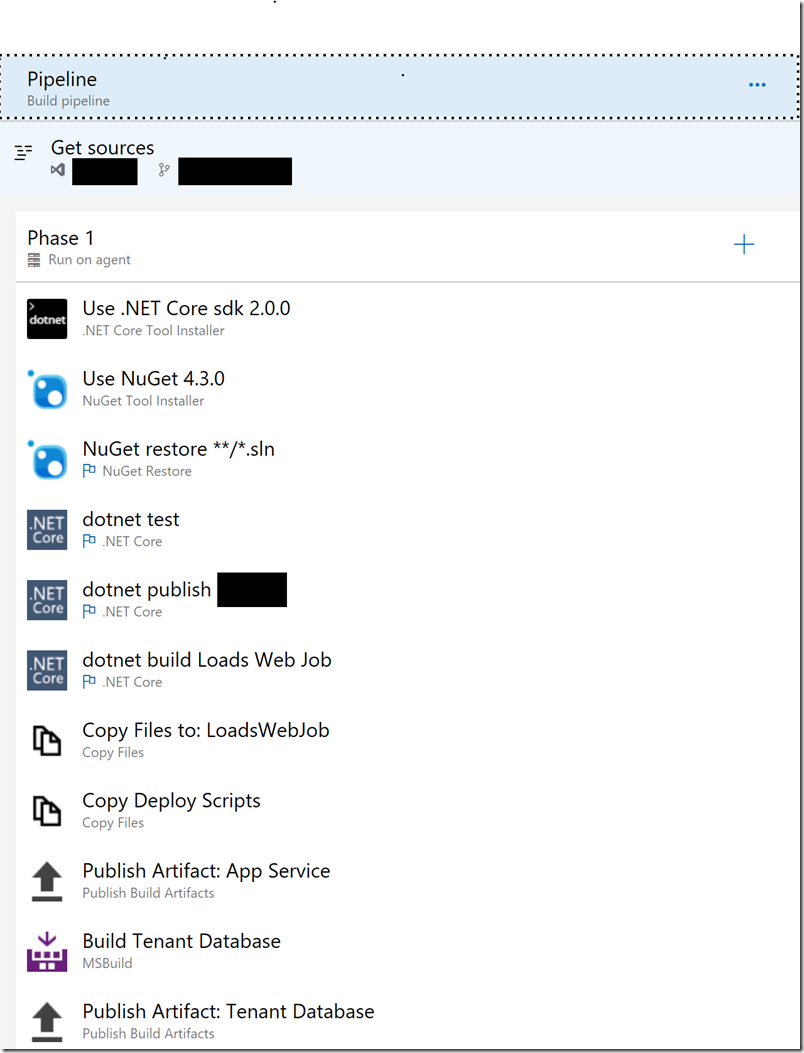

Azure Pipelines also offers GUI editors for authoring build and release pipelines.

Fortunately, a YAML option arrived recently and is getting better with every sprint.
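A minimal sketch of what the YAML option can look like, assuming the repository has a `build.ps1` at its root and a `master` branch (both assumptions for this example):

```yaml
# azure-pipelines.yml (illustrative sketch; branch and script names are assumptions)
trigger:
  - master

pool:
  vmImage: 'windows-latest'

steps:
  # Run the same script a developer would run locally
  - powershell: ./build.ps1
    displayName: 'Run build script'
```

Because the file lives in the repository, a change to the pipeline shows up in a diff and a commit history like any other change.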

Magic tools are tools like Visual Studio. For over 20 years, Visual Studio has met the goal of making complex tasks simple. However, any time you want to perform a more complex task, that simplicity, and the complexity it hides, can get in the way.

For example, what does the “Build” command in Visual Studio do, exactly? Call MSBuild? Which MSBuild? I have 10 copies of msbuild.exe on a relatively fresh machine. What parameters are passed? All the details are hidden and there’s nothing Visual Studio gives me as a starting point if I want to create a build pipeline on some other platform.

Another example of magic is the Docker support in Visual Studio. It is nice that I can right-click an ASP.NET Core project and say “Add Docker Support”. Seconds later I’ll be able to build an image, and not only run my project in a container, but debug through code executing in a container.

But try to build or run the same image outside of Visual Studio and you’ll discover just how much context is hidden by tooling. You have to dig around in the build output to discover some of the parameters, and then you'll realize VS is mapping user secrets and setting up a different environment for the container. You might also notice the quiet installation of Microsoft.VisualStudio.Azure.Containers.Tools.Targets into your project, but you won't find any documentation or source code for this NuGet package.

I think it is tooling like the Docker tooling that gives VS Code good traction. VS Code relies on configuration files and scripts that can make complex tasks simpler without making customizations and understanding inaccessible. Want to make a simple change to the Visual Studio approach? Don’t tell me you are going to edit the IL in Microsoft’s assembly! VS Code is to Visual Studio what YAML files are to GUI editors.

To wrap up this post in a single sentence: build and release definitions need source control, too.

OdeToCode by K. Scott Allen
