"Cloud Computing Governance" sounds like a talk I’d want to attend after lunch when I need an afternoon nap, but ever since the CFO walked into my office waving Azure invoices in the air, the topic is on my mind.

It seems when you turn several teams of software developers loose in the cloud, you typically set the high-level priorities like so:

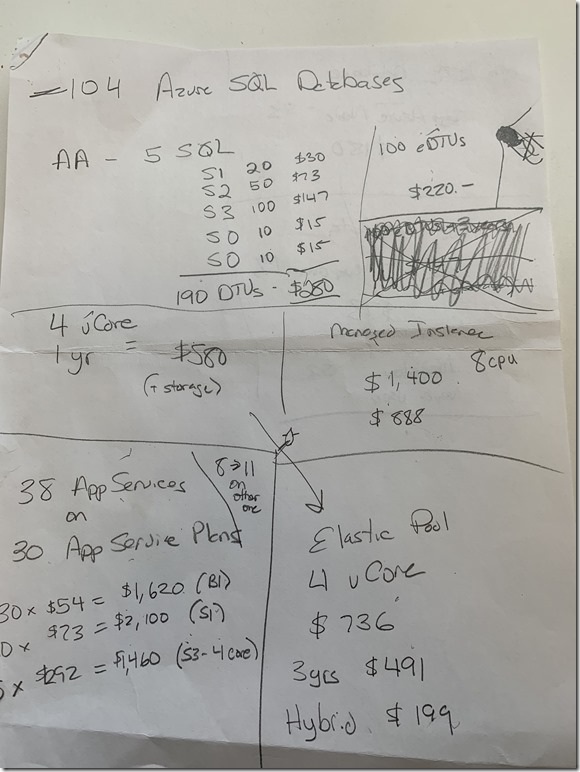

Missing from the list is the priority to "make the monthly cost as cheap as possible", but cost is easy to overlook when the focus is on security, quality, and scalability. After the CFO left, I reviewed what was happening across a dozen Azure subscriptions and I started to make some notes:

Yes, there are 104 un-pooled Azure SQL instances, and 38 app services running on 30 app service plans.

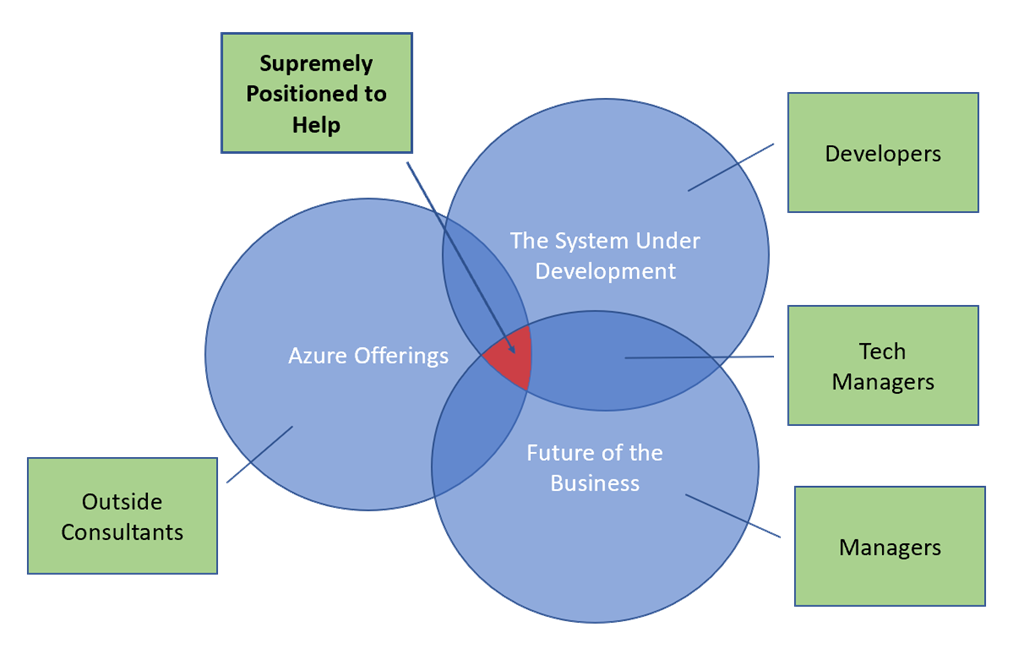

There are countless people in the world who want to sell tools and consulting services to help a company reduce costs in the cloud. To me, outside consultants start with only 1 of the 3 areas of expertise needed to optimize cost. The three areas are:

In Venn diagram form:

Let’s dig into the details of where these three areas of knowledge come into play.

Let’s say your application needs data from dozens of large customers. How will the data move into Azure? An outside consultant can’t just say “Event Hubs” or “Data Factory” without knowing some details. Is the data size measured in GB or TB? How often does the data move? Where does the data live at the customer? What needs to happen with the data in the cloud? Will any of these answers change in a year?

Without a good understanding of the Azure offerings, a tech person often answers with the technology they already know. A SQL-oriented developer, for example, will use Data Factory to pump data into an Azure SQL database. But this isn’t the most cost-effective answer if the data requires heavy-duty processing after delivery, because Azure SQL instances are priced for line-of-business transactions that need atomicity, reliability, redundancy, high availability, and automatic backups, not hardcore compute and I/O.

But let’s say the answer is SQL Server. Now what?

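Now a consultant needs to dig deeper to find out the best approach to SQL Server in the cloud. There are three broad approaches:

1. SQL Server on an Azure virtual machine (IaaS)
2. An Azure SQL managed instance
3. Azure SQL (PaaS)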

Option #1 is best for lift and shift solutions, but there is no need to take on the responsibility for clustering, upgrades, and backups if you can start with PaaS instead of IaaS. Option #2 is also designed for moving on-prem applications to the cloud, because a managed instance has better compatibility with an on-prem SQL Server, but without some of the IaaS and management hassles. For greenfield development, option #3 is the best option for most scenarios.

Once you’ve decided on option 3, there are another two levels of cost and performance options to consider. It’s not so much that Azure SQL is complicated, but Microsoft provides flexibility to cover different business scenarios. For any given Azure SQL instance, you can:

1. Provision the instance as a single database
2. Place the instance in a pool with other databases

Option #1 is the best option when you manage a single database, or you have a database with unique performance characteristics. A pool usually offers a better cost-to-performance ratio when you have 3 or more databases.
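To make the pooling trade-off concrete, here is a minimal Python sketch of the break-even arithmetic. The function names and both monthly prices are hypothetical placeholders I made up for illustration, not real Azure prices, and the sketch assumes every database would otherwise run at the same single-database tier.

```python
import math


def single_databases_cost(database_count: int, price_per_database: float) -> float:
    """Monthly cost of running every database as its own single database."""
    return database_count * price_per_database


def pool_break_even(price_per_database: float, pool_price: float) -> int:
    """Smallest number of databases at which one pool is strictly cheaper."""
    return math.floor(pool_price / price_per_database) + 1


# Hypothetical monthly prices in dollars (placeholders, not real Azure prices).
SINGLE_PRICE = 150.0
POOL_PRICE = 400.0

for count in range(1, 6):
    singles = single_databases_cost(count, SINGLE_PRICE)
    verdict = "pool is cheaper" if POOL_PRICE < singles else "singles are cheaper or equal"
    print(f"{count} databases: singles ${singles:.2f} vs pool ${POOL_PRICE:.2f} ({verdict})")

print("Break-even database count:", pool_break_even(SINGLE_PRICE, POOL_PRICE))
```

The arithmetic only covers price. It assumes the pooled databases don't all peak at the same time, which is a judgment call that needs knowledge of the application.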

After you’ve decided to pool, the next decision is how you’ll specify the performance characteristics of the pool. Will you use DTUs? Or will you use vCores? DTUs are frustratingly vague, but we do know that 20 DTUs are twice as powerful as 10 DTUs. vCores are at least a bit familiar, because we have equated CPU cores with performance capability for decades.

One significant difference between the DTU model and the vCore model is that only the vCore model allows for reserved instances and the “hybrid benefit”. Both of these options can lead to huge cost savings, but both require some business knowledge.

The “hybrid benefit” is the ability to bring your own SQL Server license. The benefit is ideal for moving SQL databases from on-prem to the cloud, because you can make use of a license you already own. Or, perhaps your organization already has a number of free licenses from the Microsoft partner program, or discounted licenses from enterprise agreements.

Reserved instances will save you 21 to 33 percent if you commit to a certain level of provisioning for 1 to 3 years. If your customers sign one-year contracts to use your service, a one-year reserved instance is a quick cost savings with little risk.

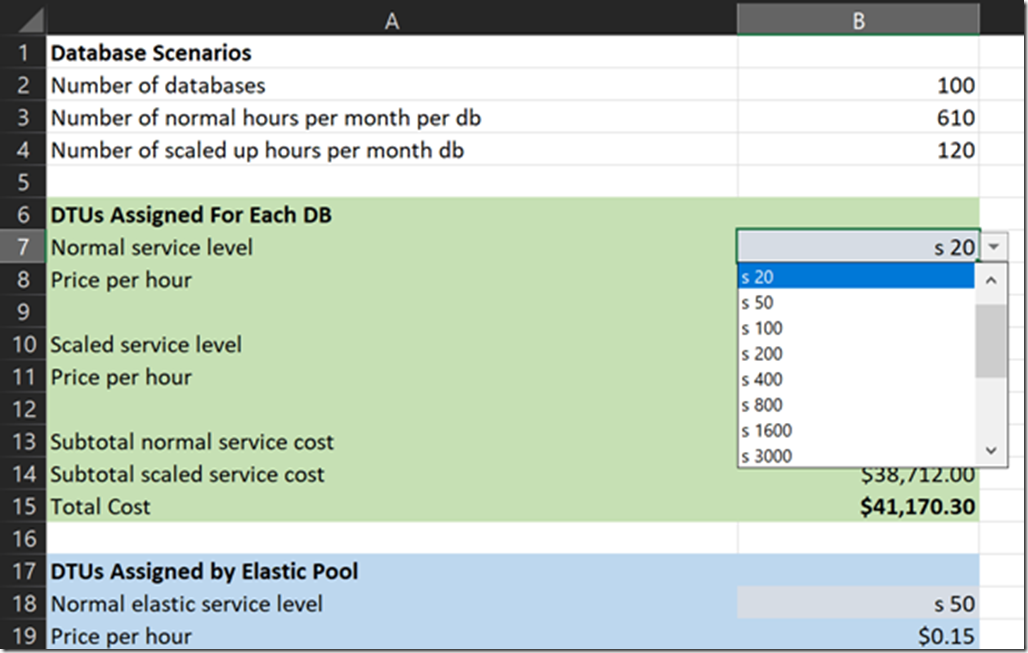

If everything I’ve said so far makes it sound like you could benefit from using a spreadsheet to run hypothetical tests, then yes, setting up a spreadsheet does help.
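If you'd rather script the hypotheticals than build a spreadsheet, the same arithmetic fits in a few lines of Python. This is a minimal sketch under stated assumptions: the monthly spend figure is a made-up placeholder, the function names are my own, and pairing the 21 percent figure with a one-year term and the 33 percent figure with a three-year term is an assumption about how the range quoted above maps to commitments.

```python
def reserved_monthly_cost(pay_as_you_go: float, discount_percent: int) -> float:
    """Monthly cost after applying a reservation discount given in whole percent."""
    return pay_as_you_go * (100 - discount_percent) / 100


def savings_over_term(pay_as_you_go: float, discount_percent: int, term_years: int) -> float:
    """Total savings across the whole commitment compared with pay-as-you-go."""
    months = term_years * 12
    monthly_saving = pay_as_you_go - reserved_monthly_cost(pay_as_you_go, discount_percent)
    return monthly_saving * months


def scenario_table(pay_as_you_go: float, scenarios: list[tuple[int, int]]) -> list[tuple]:
    """One row per (term_years, discount_percent) scenario, like rows in a spreadsheet."""
    return [
        (
            term_years,
            discount_percent,
            reserved_monthly_cost(pay_as_you_go, discount_percent),
            savings_over_term(pay_as_you_go, discount_percent, term_years),
        )
        for term_years, discount_percent in scenarios
    ]


# Hypothetical monthly spend in dollars (placeholder, not a real invoice).
MONTHLY_SPEND = 1000.0
# Assumed pairing of term length and discount: (years, percent).
SCENARIOS = [(1, 21), (3, 33)]

for years, percent, monthly, saved in scenario_table(MONTHLY_SPEND, SCENARIOS):
    print(f"{years}-year term at {percent}%: ${monthly:.2f}/month, ${saved:.2f} saved over the term")
```

The savings only materialize if you actually need the provisioned capacity for the whole term, so the inputs have to come from someone who knows the customer contracts.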

Once you have a plan, you have to enforce the plan and reevaluate it as time moves forward. But logging into the portal and eyeballing resources only works for a small number of resources. If things go as planned, I’ll be blogging about an automated approach over the next few months.

OdeToCode by K. Scott Allen
