In a previous post on using the PageObject pattern with Protractor, Martin asked how much time is wasted writing tests, and who pays for the wasted time?

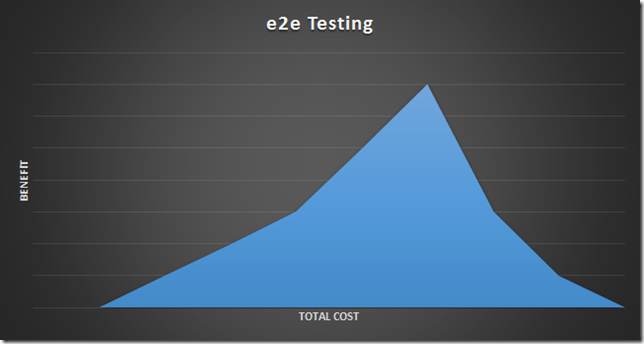

To answer that question I want to think about the costs and benefits of end to end testing. I believe the cost benefit for true end to end testing looks like the following.

There are two significant slopes in the graph. First is the “getting started” slope, which ramps up positively, but slowly. Yes, there are some technical challenges in e2e testing, like learning how to write and debug tests with a new tool or framework. But what typically slows the growth in benefit is organizational. Unlike unit tests, which a developer might write on her own, e2e tests require coordination and planning across teams, both technical and non-technical. You need to plan the provisioning and automation of test environments, and have people create databases with data that is representative of production but scrubbed of protected personal data. Business involvement is crucial, too, as you need to make sure the testing effort is testing the right acceptance criteria for stakeholders.

All of the coordination and work required for e2e testing on a real application is a bit of a hurdle and is sure to build resistance to the effort in many projects. However, the sweet spot at the top of the graph, where the benefit reaches a maximum, is a nice place to be. The tests give everyone confidence that the application is working correctly, and allow teams to create features and deploy at a faster pace. There is a positive return on the investment made in e2e tests. Sure, the test suite might take one hour to run, but it can run any time of the day or night, and every run might save 40 hours of human drudgery.
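To make that arithmetic concrete, here is a rough sketch of how the return might be tallied. Only the 40 hours saved per run comes from the example above. The setup cost, the upkeep per run, and the names `SuiteEconomics`, `netHours`, and `breakEvenRuns` are all hypothetical, chosen for illustration. The one hour of machine time is left out because the suite runs unattended.

```typescript
// Hypothetical model: every figure except savedHoursPerRun = 40 is an assumption.
interface SuiteEconomics {
  setupHours: number;        // assumed one-time cost of environments, data, and tests
  upkeepHoursPerRun: number; // assumed average human time spent fixing tests per run
  savedHoursPerRun: number;  // manual testing avoided by each run
}

// Net human hours gained (or lost, if negative) after a number of runs.
function netHours(e: SuiteEconomics, runs: number): number {
  return runs * (e.savedHoursPerRun - e.upkeepHoursPerRun) - e.setupHours;
}

// Smallest number of runs at which the suite has paid for itself,
// or Infinity when upkeep eats everything a run saves.
function breakEvenRuns(e: SuiteEconomics): number {
  const gainPerRun = e.savedHoursPerRun - e.upkeepHoursPerRun;
  return gainPerRun > 0 ? Math.ceil(e.setupHours / gainPerRun) : Infinity;
}

const healthy: SuiteEconomics = { setupHours: 400, upkeepHoursPerRun: 2, savedHoursPerRun: 40 };
const brittle: SuiteEconomics = { setupHours: 400, upkeepHoursPerRun: 45, savedHoursPerRun: 40 };

console.log(breakEvenRuns(healthy)); // 11
console.log(netHours(healthy, 10));  // -20
console.log(breakEvenRuns(brittle)); // Infinity
```

With these made-up numbers the suite pays for itself on the eleventh run. The same model never breaks even once upkeep per run exceeds the hours a run saves.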

There is also an ugly side to e2e testing, where the benefit starts to slope downward. Although the slope might not always be negative, I do believe the law of diminishing returns is always in play. End-to-end tests can be amazingly brittle and fail with the slightest change in the system or environment. The failures lead to frustration, and it is easy for a test suite to become the villain that everyone despises. I’ve seen this scenario play out when the test strategy is driven by mindless metrics, like a goal to reach 90% code coverage.

In short, every application needs testing before release, and automated e2e tests can speed the process. Unfortunately, making a good test suite that doesn't become a detriment to the project is difficult, due to the complex nature of both software and the human mind. I encourage everyone to write high-value tests for the riskier pieces of the application so the tests can catch real errors and build confidence.

OdeToCode by K. Scott Allen
